A pipeline connects a source database to a destination and continuously streams changes. Once created, a pipeline handles initial backfills, schema evolution, and ongoing replication without manual intervention. Each pipeline consists of:

- Source reader - Connects to your source database and captures changes via CDC. A source reader can be shared across multiple pipelines so your database is only read once.

- Destination connector - Writes changes to a specific destination (e.g. Snowflake, BigQuery, S3).

Writing to multiple destinations

Artie supports reading from a single source and writing to multiple destinations in parallel - for example, streaming changes to both S3 and Snowflake simultaneously. This is configured by creating multiple pipelines that share the same source reader, so your source database is only read once regardless of how many destinations you have. This feature is currently available through Terraform. To set it up, create an artie_source_reader resource and reference it across multiple artie_pipeline resources.
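
As a rough illustration of that layout, the sketch below declares one source reader and two pipelines that point at it. Only the resource types artie_source_reader and artie_pipeline come from the text above. Every attribute name (name, source_reader_uuid, destination_connector_uuid, the uuid output), the variable names, and the resource labels are assumptions made for illustration, and required settings such as provider configuration, credentials, and table selection are left out. Check the Artie Terraform provider documentation for the actual schema before using any of it.

```hcl
# Sketch only: attribute names below are assumptions, not the confirmed
# provider schema. Required settings (provider block, credentials, tables)
# are intentionally omitted.

# Hypothetical inputs: identifiers of destination connectors defined elsewhere.
variable "snowflake_connector_uuid" {
  type = string
}

variable "s3_connector_uuid" {
  type = string
}

# One source reader, declared once, so the source database is read once.
resource "artie_source_reader" "shared" {
  name = "postgres-shared-reader" # hypothetical name
}

# First pipeline: shared reader -> Snowflake.
resource "artie_pipeline" "to_snowflake" {
  name                       = "postgres-to-snowflake"
  source_reader_uuid         = artie_source_reader.shared.uuid # assumed attribute
  destination_connector_uuid = var.snowflake_connector_uuid    # assumed attribute
}

# Second pipeline: the same shared reader -> S3.
resource "artie_pipeline" "to_s3" {
  name                       = "postgres-to-s3"
  source_reader_uuid         = artie_source_reader.shared.uuid # assumed attribute
  destination_connector_uuid = var.s3_connector_uuid           # assumed attribute
}
```

The point of the sketch is the shape rather than the field names: both artie_pipeline blocks reference the same artie_source_reader, so adding another destination means adding another pipeline block, not another reader.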

Pausing and resuming

You can pause a pipeline at any time from the pipeline overview page. Artie offers two pause modes:

- Pause writing only - Artie stops writing to the destination but continues capturing changes from the source. Events accumulate in Kafka, so no data is lost. If you resume within 14 days, all queued changes are applied in order and no backfill is needed.

- Pause reading & writing - Artie stops both reading from the source and writing to the destination entirely. No new changes are captured while paused.

Autopilot

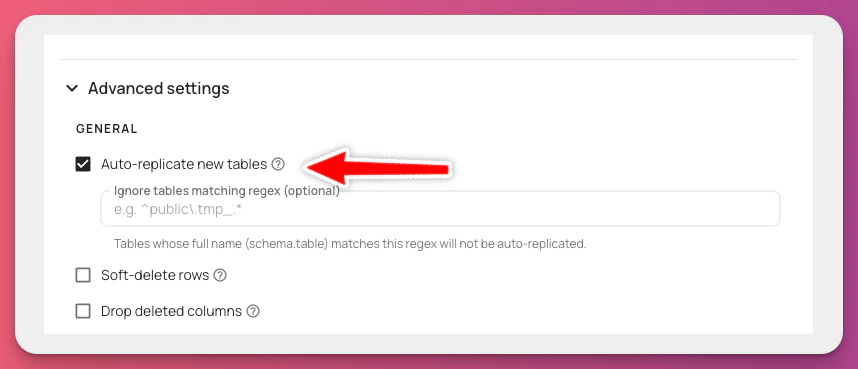

Autopilot is a feature that will automatically replicate new tables from your source database. To enable autopilot:

- Go to the pipeline editor

- Click on the Destination tab

- Click on the Advanced settings tab

- Enable Auto-replicate new tables